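1. Background

For work, I want to containerize our company's existing application services with Docker and orchestrate them with Kubernetes. This article records the deployment process.

Once the cluster skeleton is in place, services such as Ingress, Dashboard, and Metrics-server need to be installed to provide service exposure, visual management, and cluster monitoring.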

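2. Articles in This Series

- Quickly Building a Highly Available Kubernetes Cluster, Part 1: Base Environment Initialization
- Quickly Building a Highly Available Kubernetes Cluster, Part 2: Initializing the Cluster with Kubeadm
- Quickly Building a Highly Available Kubernetes Cluster, Part 3: Ingress, Dashboard, Metrics-server
- Quickly Building a Highly Available Kubernetes Cluster, Part 4: Rook-Ceph
- Quickly Building a Highly Available Kubernetes Cluster, Part 5: Harbor
- Quickly Building a Highly Available Kubernetes Cluster, Part 6: Prometheus
- Quickly Building a Highly Available Kubernetes Cluster, Part 7: ELK-stack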

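3. Preparation

Download the following three projects to obtain their configuration files and documentation.

- Metrics-server release package, version v0.3.7
- Dashboard GitHub project, version v2.0.4
- Ingress GitHub project, version ingress-nginx-2.15.0

Pull the images referenced in the configuration files ahead of time to speed up deployment. The commands below pull each image from an Aliyun mirror, retag it with the name the manifests expect, and then remove the mirror tag.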

docker pull registry.cn-beijing.aliyuncs.com/fcu3dx/metrics-server:v0.3.7

docker pull registry.cn-beijing.aliyuncs.com/fcu3dx/nginx-ingress-controller:v0.35.0

docker pull registry.cn-beijing.aliyuncs.com/fcu3dx/kube-webhook-certgen:v1.2.2

docker pull registry.cn-beijing.aliyuncs.com/fcu3dx/dashboard:v2.0.4

docker pull registry.cn-beijing.aliyuncs.com/fcu3dx/metrics-scraper:v1.0.4

docker pull registry.cn-beijing.aliyuncs.com/fcu3dx/defaultbackend-amd64:1.5

docker tag registry.cn-beijing.aliyuncs.com/fcu3dx/metrics-server:v0.3.7 k8s.gcr.io/metrics-server/metrics-server:v0.3.7

docker tag registry.cn-beijing.aliyuncs.com/fcu3dx/nginx-ingress-controller:v0.35.0 k8s.gcr.io/ingress-nginx/controller:v0.35.0

docker tag registry.cn-beijing.aliyuncs.com/fcu3dx/kube-webhook-certgen:v1.2.2 docker.io/jettech/kube-webhook-certgen:v1.2.2

docker tag registry.cn-beijing.aliyuncs.com/fcu3dx/dashboard:v2.0.4 kubernetesui/dashboard:v2.0.4

docker tag registry.cn-beijing.aliyuncs.com/fcu3dx/metrics-scraper:v1.0.4 kubernetesui/metrics-scraper:v1.0.4

docker tag registry.cn-beijing.aliyuncs.com/fcu3dx/defaultbackend-amd64:1.5 k8s.gcr.io/defaultbackend-amd64:1.5

docker rmi registry.cn-beijing.aliyuncs.com/fcu3dx/metrics-server:v0.3.7

docker rmi registry.cn-beijing.aliyuncs.com/fcu3dx/nginx-ingress-controller:v0.35.0

docker rmi registry.cn-beijing.aliyuncs.com/fcu3dx/kube-webhook-certgen:v1.2.2

docker rmi registry.cn-beijing.aliyuncs.com/fcu3dx/dashboard:v2.0.4

docker rmi registry.cn-beijing.aliyuncs.com/fcu3dx/metrics-scraper:v1.0.4

docker rmi registry.cn-beijing.aliyuncs.com/fcu3dx/defaultbackend-amd64:1.5
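The eighteen commands above follow one pattern per image (pull, tag, rmi), so they can be driven from a single mapping table. The following is a minimal sketch of that idea, not part of the original procedure: the helper names `run` and `sync_images` and the `DRY_RUN` switch are assumptions introduced for illustration. By default the script only prints the docker commands it would run.

```shell
#!/bin/sh
# Sketch: pull, retag, and clean up the mirrored images in one loop.
# DRY_RUN defaults to 1, so commands are printed instead of executed;
# run with DRY_RUN=0 to really call docker.
MIRROR=registry.cn-beijing.aliyuncs.com/fcu3dx

run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Each input line: "<name:tag in the mirror> <image name expected by the manifests>"
sync_images() {
  while read -r src dst; do
    run docker pull "$MIRROR/$src" || return 1
    run docker tag "$MIRROR/$src" "$dst" || return 1
    run docker rmi "$MIRROR/$src" || return 1
  done <<EOF
metrics-server:v0.3.7 k8s.gcr.io/metrics-server/metrics-server:v0.3.7
nginx-ingress-controller:v0.35.0 k8s.gcr.io/ingress-nginx/controller:v0.35.0
kube-webhook-certgen:v1.2.2 docker.io/jettech/kube-webhook-certgen:v1.2.2
dashboard:v2.0.4 kubernetesui/dashboard:v2.0.4
metrics-scraper:v1.0.4 kubernetesui/metrics-scraper:v1.0.4
defaultbackend-amd64:1.5 k8s.gcr.io/defaultbackend-amd64:1.5
EOF
}

sync_images
```

Since the images are only cached locally, the script would have to be run on every node that may schedule these pods.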

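4. Deployment

4.1 Deploying metrics-server

4.1.1 Modify the configuration

In the metrics-server directory, open the configuration file metrics-server-deployment.yaml and add several parameters under args.

Original content: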

- name: metrics-server
  image: k8s.gcr.io/metrics-server/metrics-server:v0.3.7
  imagePullPolicy: IfNotPresent
  args:
    - --cert-dir=/tmp
    - --secure-port=4443

Modified content:

- name: metrics-server
  image: k8s.gcr.io/metrics-server/metrics-server:v0.3.7
  imagePullPolicy: IfNotPresent
  args:
    - --cert-dir=/tmp
    - --secure-port=4443
    - --metric-resolution=30s
    # The kubelet serves port 10250 over HTTPS, so connections normally verify its TLS certificate.
    # --kubelet-insecure-tls makes metrics-server skip verification of the kubelet's serving certificate.
    - --kubelet-insecure-tls
    # By default metrics-server reaches each kubelet on port 10250 by node hostname, but CoreDNS (10.96.0.10:53)
    # has no record for those hostnames and cannot resolve them. Listing InternalIP first in
    # --kubelet-preferred-address-types makes metrics-server use the node IP address instead.
    - --kubelet-preferred-address-types=InternalIP,Hostname,InternalDNS,ExternalDNS,ExternalIP
    - --logtostderr
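Before applying the manifest it can be worth confirming that the edited file really contains the added flags. The check below is a small sketch; the function name `check_metrics_args` is hypothetical, and the file path in the usage note is assumed from the directory used in the next step.

```shell
#!/bin/sh
# check_metrics_args FILE
# Succeeds only if FILE contains each flag added in the step above.
check_metrics_args() {
  file=$1
  for flag in --metric-resolution=30s \
              --kubelet-insecure-tls \
              --kubelet-preferred-address-types=InternalIP; do
    # -F: fixed string, -e: keep the leading dashes from being read as options
    if ! grep -qF -e "$flag" "$file"; then
      echo "missing flag: $flag" >&2
      return 1
    fi
  done
  echo "ok: $file"
}
```

Usage, with an assumed path: check_metrics_args /etc/kubernetes/gitlab/metrics-server-0.3.7/deploy/1.8+/metrics-server-deployment.yaml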

4.1.2 执行部署命令

kubectl apply -f /etc/kubernetes/gitlab/metrics-server-0.3.7/deploy/1.8+/

4.1.3 验证

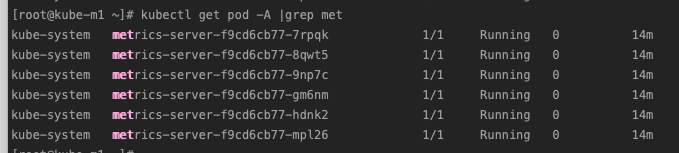

查看 POD 状态

kubectl get pod -A |grep met

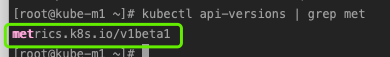

查看 API :

kubectl api-versions | grep met

验证结果:

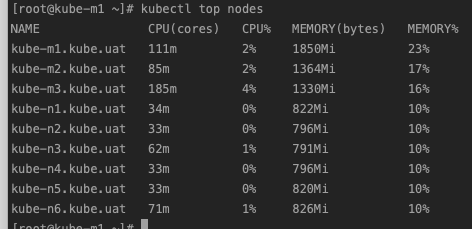

查看集群节点资源使用情况

kubectl top nodes

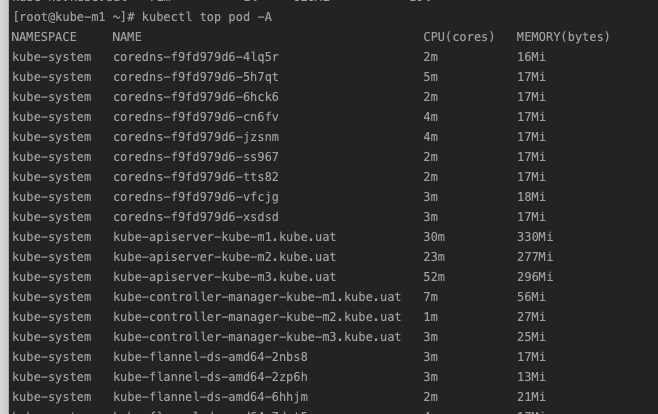

查看各 pod 使用资源状况

kubectl top pod -A
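Right after deployment, kubectl top may return an error for a short while until metrics-server has collected its first samples. A generic retry helper, sketched below, avoids rerunning the command by hand; `retry` is a helper defined here for illustration, not a kubectl feature.

```shell
#!/bin/sh
# retry ATTEMPTS DELAY CMD...
# Runs CMD until it succeeds or ATTEMPTS tries have failed,
# sleeping DELAY seconds between tries.
retry() {
  attempts=$1
  delay=$2
  shift 2
  n=1
  while [ "$n" -le "$attempts" ]; do
    if "$@"; then
      return 0
    fi
    echo "attempt $n/$attempts failed: $*" >&2
    n=$((n + 1))
    if [ "$n" -le "$attempts" ]; then
      sleep "$delay"
    fi
  done
  return 1
}
```

Usage: retry 12 10 kubectl top nodes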

4.2 Ingress 部署

由于使用 kubectl apply 进行部署会出现一些问题,这里直接使用 helm 进行部署

在国内不容易访问 k8s.gcr.io 这个网站,所以使用前面的方法,提前下载镜像。

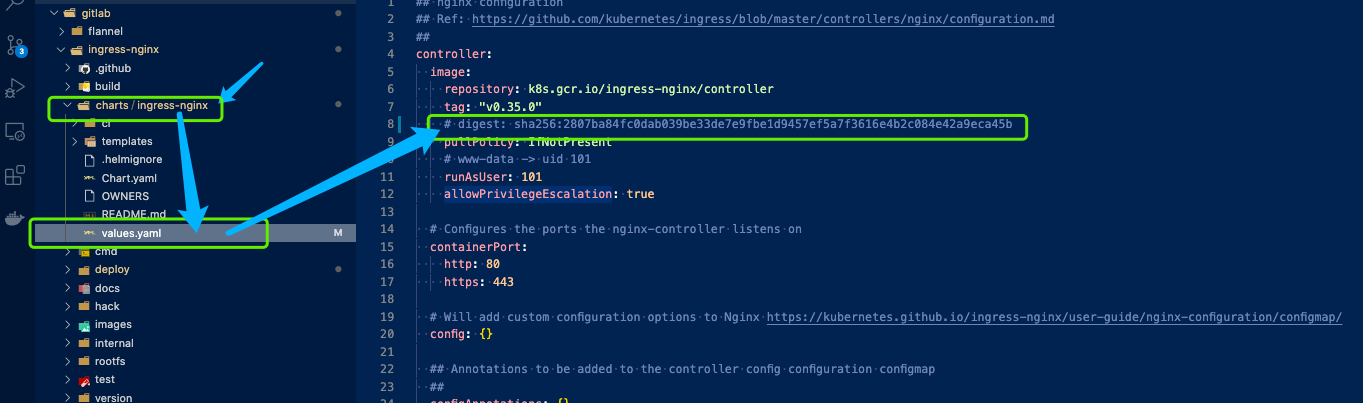

4.2.1 修改配置

因原文件中使用的镜像带有 sha256 的地址,而我们自行修改下载的镜像没有这个地址,故需修改镜像地址如图:

最后配置文件如下(我的是6个 worker 节点,故replicaCount 为 6):

controller:
  image:
    repository: k8s.gcr.io/ingress-nginx/controller
    tag: "v0.35.0"
    pullPolicy: IfNotPresent
    runAsUser: 101
    allowPrivilegeEscalation: true
  containerPort:
    http: 80
    https: 443
  config: {}
  configAnnotations: {}
  proxySetHeaders: {}
  addHeaders: {}
  dnsConfig: {}
  dnsPolicy: ClusterFirst
  reportNodeInternalIp: false
  hostNetwork: true
  hostPort:
    enabled: true
    ports:
      http: 80
      https: 443
  electionID: ingress-controller-leader
  ingressClass: nginx
  podLabels: {}
  podSecurityContext: {}
  sysctls: {}
  publishService:
    enabled: true
    pathOverride: ""
  scope:
    enabled: false
  tcp:
    annotations: {}
  udp:
    annotations: {}
  extraArgs: {}
  extraEnvs: []
  kind: DaemonSet
  annotations: {}
  labels: {}
  updateStrategy: {}
  minReadySeconds: 0
  tolerations: []
  affinity: {}
  terminationGracePeriodSeconds: 300
  nodeSelector: {}
  livenessProbe:
    failureThreshold: 5
    initialDelaySeconds: 10
    periodSeconds: 10
    successThreshold: 1
    timeoutSeconds: 1
    port: 10254
  readinessProbe:
    failureThreshold: 3
    initialDelaySeconds: 10
    periodSeconds: 10
    successThreshold: 1
    timeoutSeconds: 1
    port: 10254
  healthCheckPath: "/healthz"
  podAnnotations: {}
  replicaCount: 6
  minAvailable: 1
  resources:
    requests:
      cpu: 100m
      memory: 90Mi
  autoscaling:
    enabled: true
    minReplicas: 1
    maxReplicas: 11
    targetCPUUtilizationPercentage: 50
    targetMemoryUtilizationPercentage: 50
  autoscalingTemplate: []
  enableMimalloc: false
  customTemplate:
    configMapName: ""
    configMapKey: ""
  service:
    enabled: true
    annotations: {}
    labels: {}
    externalIPs: []
    loadBalancerIP: ""
    loadBalancerSourceRanges: []
    enableHttp: true
    enableHttps: true
    ports:
      http: 80
      https: 443
    targetPorts:
      http: http
      https: https
    type: ClusterIP
    nodePorts:
      http: ""
      https: ""
      tcp: {}
      udp: {}
    internal:
      enabled: false
      annotations: {}
  extraContainers: []
  extraVolumeMounts: []
  extraVolumes: []
  extraInitContainers: []
  admissionWebhooks:
    enabled: true
    failurePolicy: Fail
    port: 8443
    service:
      annotations: {}
      externalIPs: []
      loadBalancerSourceRanges: []
      servicePort: 443
      type: ClusterIP
    patch:
      enabled: true
      image:
        repository: docker.io/jettech/kube-webhook-certgen
        tag: v1.2.2
        pullPolicy: IfNotPresent
      priorityClassName: ""
      podAnnotations: {}
      nodeSelector: {}
      tolerations: []
      runAsUser: 2000
  metrics:
    port: 10254
    enabled: false
    service:
      annotations: {}
      externalIPs: []
      loadBalancerSourceRanges: []
      servicePort: 9913
      type: ClusterIP
    serviceMonitor:
      enabled: false
      additionalLabels: {}
      namespace: ""
      namespaceSelector: {}
      scrapeInterval: 30s
      targetLabels: []
      metricRelabelings: []
    prometheusRule:
      enabled: false
      additionalLabels: {}
      rules: []
  lifecycle:
    preStop:
      exec:
        command:
          - /wait-shutdown
  priorityClassName: ""
revisionHistoryLimit: 10
maxmindLicenseKey: ""
defaultBackend:
  enabled: true
  image:
    repository: k8s.gcr.io/defaultbackend-amd64
    tag: "1.5"
    pullPolicy: IfNotPresent
    runAsUser: 65534
  extraArgs: {}
  serviceAccount:
    create: true
    name:
  extraEnvs: []
  port: 8080
  livenessProbe:
    failureThreshold: 3
    initialDelaySeconds: 30
    periodSeconds: 10
    successThreshold: 1
    timeoutSeconds: 5
  readinessProbe:
    failureThreshold: 6
    initialDelaySeconds: 0
    periodSeconds: 5
    successThreshold: 1
    timeoutSeconds: 5
  tolerations: []
  affinity: {}
  podSecurityContext: {}
  podLabels: {}
  nodeSelector: {}
  podAnnotations: {}
  replicaCount: 6
  minAvailable: 1
  resources: {}
  service:
    annotations: {}
    externalIPs: []
    loadBalancerSourceRanges: []
    servicePort: 80
    type: ClusterIP
  priorityClassName: ""
rbac:
  create: true
  scope: false
podSecurityPolicy:
  enabled: false
serviceAccount:
  create: true
  name:
imagePullSecrets: []
tcp: {}
udp: {}
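Because the install will fail to find the retagged images if a digest is left behind, a quick check of the edited values file before installing can save a failed rollout. This is a sketch only: `check_ingress_values` is a hypothetical helper, and it merely looks for the strings this article mentions (a leftover sha256 reference and the three image tags pulled earlier).

```shell
#!/bin/sh
# check_ingress_values FILE
# Fails if FILE still references a sha256 digest, or if one of the
# image tags pulled earlier is not set in it.
check_ingress_values() {
  file=$1
  if grep -q 'sha256' "$file"; then
    echo "digest reference still present in $file" >&2
    return 1
  fi
  for want in 'tag: "v0.35.0"' 'tag: v1.2.2' 'tag: "1.5"'; do
    if ! grep -qF -e "$want" "$file"; then
      echo "expected image setting not found: $want" >&2
      return 1
    fi
  done
  echo "ok: $file"
}
```

Usage: check_ingress_values /etc/kubernetes/gitlab/ingress-nginx/charts/ingress-nginx/values.yaml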

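4.2.2 Run the deployment

# Create the namespace

kubectl create namespace ingress-nginx

Because the cluster has several nodes, I run one controller instance on each of them. Install the chart with the values file prepared above:

helm -n ingress-nginx install kube /etc/kubernetes/gitlab/ingress-nginx/charts/ingress-nginx/ -f /etc/kubernetes/gitlab/ingress-nginx/charts/ingress-nginx/values.yaml

Command parameters:

| Parameter (command) | Description |
|---|---|
| -n ingress-nginx | Target namespace |
| install | Install a release; upgrade (upgrade a release) and uninstall (remove a release) are used the same way |
| kube | Release name, chosen freely; it prefixes the created resources so releases can be told apart |
| /etc/kubernetes/gitlab/ingress-nginx/charts/ingress-nginx/ | Chart location; may also be a helm repo reference, a URL, etc. |
| -f /etc/kubernetes/gitlab/ingress-nginx/charts/ingress-nginx/values.yaml | Values file used for the installation |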
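The namespace creation and the install can also be folded into one repeatable command. The sketch below assumes Helm 3.2 or later, where `helm upgrade --install` accepts `--create-namespace`; the `run` helper and `DRY_RUN` switch are illustrative additions, and by default the script only prints the command.

```shell
#!/bin/sh
# Sketch: idempotent install of the ingress-nginx chart used in this article.
# DRY_RUN defaults to 1 (print only); run with DRY_RUN=0 to call helm.
CHART=/etc/kubernetes/gitlab/ingress-nginx/charts/ingress-nginx

run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

install_ingress() {
  # upgrade --install: installs the release if absent, upgrades it otherwise
  run helm upgrade --install kube "$CHART" \
    --namespace ingress-nginx --create-namespace \
    -f "$CHART/values.yaml"
}

install_ingress
```

Running the same command again after editing values.yaml applies the change as an upgrade instead of failing because the release already exists.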

When the command finishes, Helm prints an example; following it, further services can be exposed through Ingress.

apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  annotations:
    kubernetes.io/ingress.class: nginx
  name: example
  namespace: foo
spec:
  rules:
    - host: www.example.com
      http:
        paths:
          - backend:
              serviceName: exampleService
              servicePort: 80
            path: /
  # This section is only required if TLS is to be enabled for the Ingress
  tls:
    - hosts:
        - www.example.com
      secretName: example-tls

If TLS is enabled for the Ingress, a Secret containing the certificate and key must also be provided:

apiVersion: v1
kind: Secret
metadata:
  name: example-tls
  namespace: foo
data:
  tls.crt: <base64 encoded cert>
  tls.key: <base64 encoded key>
type: kubernetes.io/tls
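The two placeholders in the Secret are the base64 encodings of the certificate and key files, each on a single line. A minimal sketch of filling them in from files follows; the function names and the file names in the usage note are hypothetical.

```shell
#!/bin/sh
# b64_file FILE: base64-encode FILE as a single line (no wrapping).
b64_file() {
  base64 < "$1" | tr -d '\n'
}

# tls_secret_yaml NAME NAMESPACE CERT_FILE KEY_FILE
# Prints a kubernetes.io/tls Secret manifest on stdout.
tls_secret_yaml() {
  name=$1
  namespace=$2
  cert=$3
  key=$4
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: $name
  namespace: $namespace
data:
  tls.crt: $(b64_file "$cert")
  tls.key: $(b64_file "$key")
type: kubernetes.io/tls
EOF
}
```

Usage: tls_secret_yaml example-tls foo server.pem server-key.pem | kubectl apply -f -

The kubectl create secret tls command used later in this article performs the same encoding automatically.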

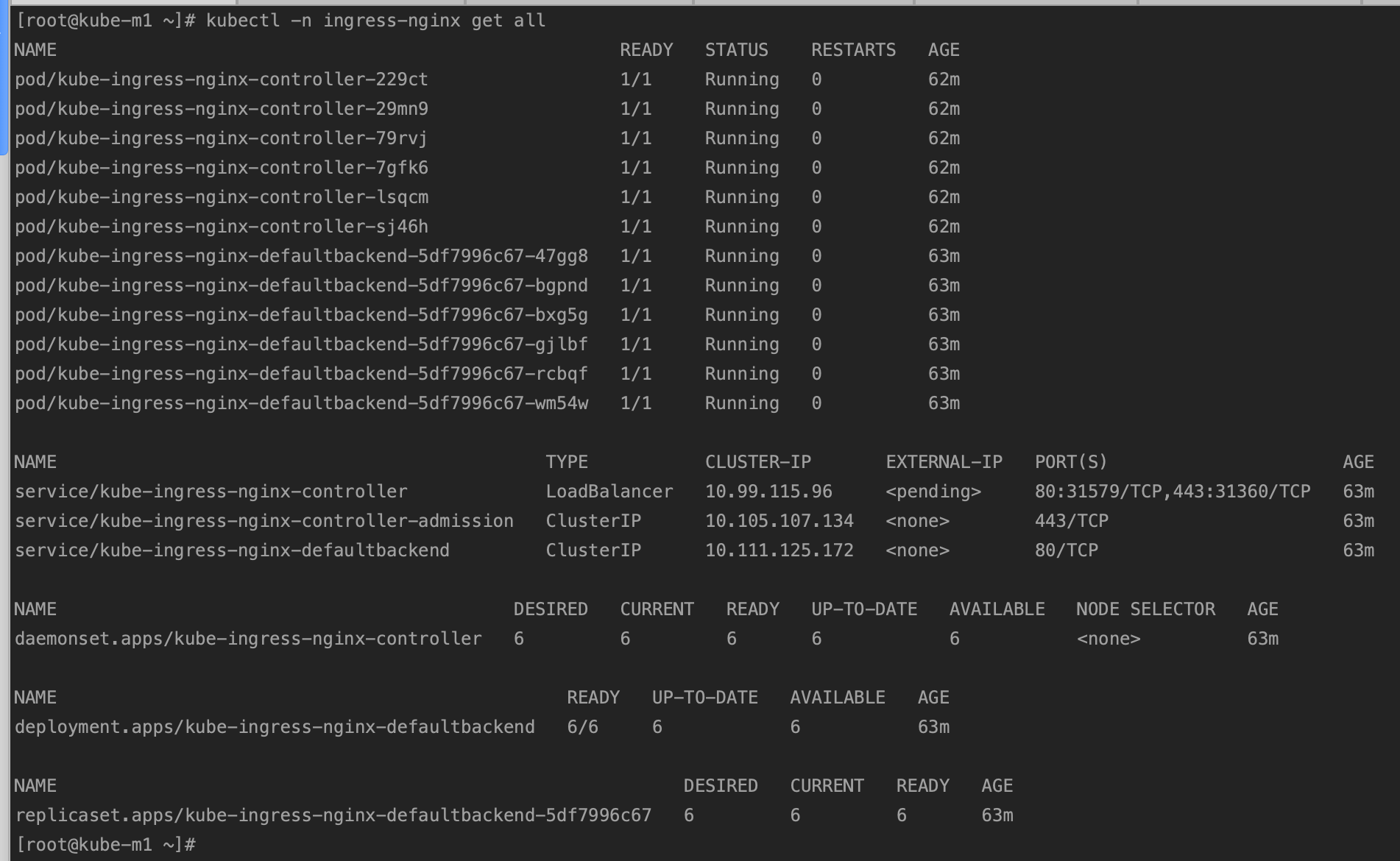

4.2.3 验证

查看 pod 运行状态

kubectl -n ingress-nginx get all

4.3 Kubernetes Dashboard 部署

4.3.1 修改配置

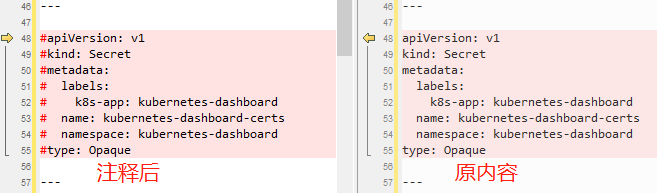

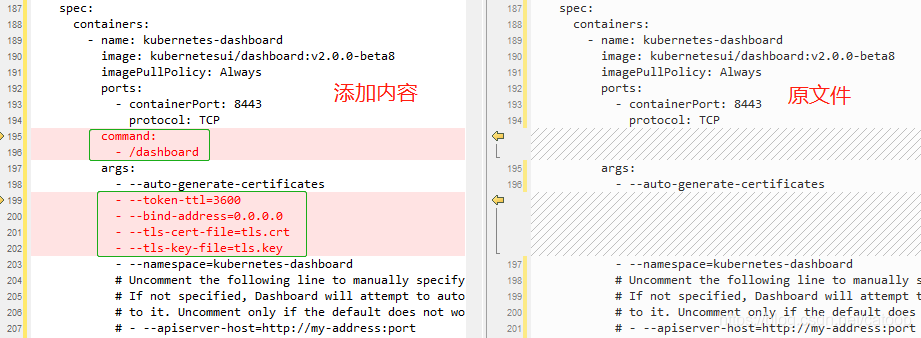

修改文件 ./aio/deploy/recommended.yaml

注释自动创建 TLS 证书,后面手动创建自己的证书。

修改容器启动参数
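The screenshots carry the exact change, so the following is only an assumed reconstruction of a common arrangement: replace the Dashboard's `--auto-generate-certificates` argument with `--tls-cert-file` and `--tls-key-file`, pointing at the tls.crt and tls.key keys that a TLS secret provides. The function name `patch_dashboard_args` is hypothetical; it writes the patched manifest to stdout and leaves the input file untouched.

```shell
#!/bin/sh
# patch_dashboard_args FILE
# Prints FILE with the "- --auto-generate-certificates" argument replaced by
# explicit certificate and key file arguments, keeping the indentation.
patch_dashboard_args() {
  sed 's/^\( *\)- --auto-generate-certificates$/\1- --tls-cert-file=tls.crt\
\1- --tls-key-file=tls.key/' "$1"
}
```

Usage: patch_dashboard_args ./aio/deploy/recommended.yaml > recommended.patched.yaml, then review the diff before applying. The Secret named kubernetes-dashboard-certs inside recommended.yaml still has to be commented out by hand, as described above.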

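4.3.2 Create the certificate

To stay consistent with the etcd certificates, the certificate is created with the cfssl tool and signed by the same CA.

Configure the ca-config.json (signing) file: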

{
  "signing": {
    "default": {
      "expiry": "87600h"
    },
    "profiles": {
      "kubernetes": {
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ],
        "expiry": "87600h"
      }
    }
  }
}

Configure the kube-uat-csr.json file:

{
  "CN": "kubernetes",
  "hosts": [
    "127.0.0.1",
    "172.17.0.151",
    "172.17.0.152",
    "172.17.0.153",
    "172.17.0.154",
    "172.17.0.155",
    "172.17.0.156",
    "172.17.0.157",
    "172.17.0.158",
    "172.17.0.159",
    "kubernetes",
    "kubernetes.default",
    "kubernetes.default.svc",
    "kubernetes.default.svc.cluster",
    "kubernetes.default.svc.cluster.local",
    "*.kube.uat",
    "dashboard.kube.uat",
    "harbor.kube.uat",
    "ceph.kube.uat"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "Gxsk",
      "OU": "Data"
    }
  ]
}

Generate the certificate with cfssl and pipe the result to cfssljson, which writes the certificate files:

/usr/local/bin/cfssl gencert \
  -ca=/etc/etcd/ssl/etcd-ca.pem \
  -ca-key=/etc/etcd/ssl/etcd-ca-key.pem \
  -config=/etc/etcd/ssl/ca-config.json \
  -profile=kubernetes \
  /etc/kubernetes/pki/kube-uat-csr.json | \
  cfssljson -bare /etc/kubernetes/pki/kube-uat

| 参数 | 说明 |

|---|---|

| /usr/local/bin/cfssl | 可执行文件路径 |

| gencert | 生成 cert 证书 |

| -ca | CA 证书路径,这里和 ETCD使用同一个 CA 证书 |

| -ca-key | CA 证书的 Key 路径 |

| -config | CA 证书的 Sign 配置 |

| -profile | 配置段,即配置文件中 “CN” 的值 |

| /etc/etcd/ssl/ca-config.json | 要认证的域名配置文件,即kube-uat-csr.json文件 |

| cfssljson | cfssl json 生成工具 |

| -bare | 证书生成路径参数 |

| /etc/kubernetes/pki/kube-uat | 证书路径,其中最后一段 kube-uat 为证书前缀,会生成 kube-uat.pem 等类似命名的证书相关文件 |

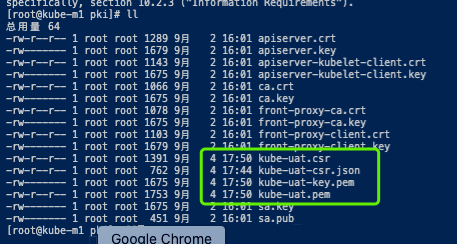

执行后生成 CERT 证书文件

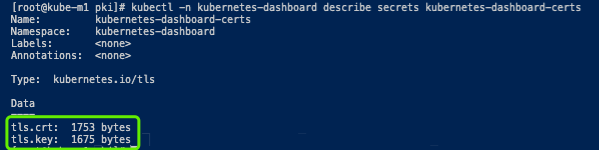
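To confirm that the generated certificate covers the intended hosts, the Subject Alternative Name extension can be printed with openssl. This is a small sketch that assumes openssl is installed on the node; `show_sans` is a hypothetical helper name.

```shell
#!/bin/sh
# show_sans CERT_FILE
# Prints the Subject Alternative Name section of a PEM certificate.
show_sans() {
  cert=$1
  if [ ! -f "$cert" ]; then
    echo "no such file: $cert" >&2
    return 1
  fi
  # -A1 prints the header line plus the following line holding the names
  openssl x509 -in "$cert" -noout -text | grep -A1 'Subject Alternative Name'
}
```

Usage: show_sans /etc/kubernetes/pki/kube-uat.pem, then check that dashboard.kube.uat or the wildcard *.kube.uat is listed.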

4.3.3 Create kubernetes-dashboard-certs

Automatic certificate generation was commented out above, so the secret is created manually here. The Dashboard uses the namespace kubernetes-dashboard by default.

# Create the namespace

kubectl create namespace kubernetes-dashboard

# Change to the directory containing the certificate generated above

cd /etc/kubernetes/pki/

# Create the secret

kubectl -n kubernetes-dashboard create secret tls kubernetes-dashboard-certs --key kube-uat-key.pem --cert kube-uat.pem

Parameters:

| Command or parameter | Description |
|---|---|
| kubectl | The command |
| -n kubernetes-dashboard | Target namespace |
| create secret tls | Create a secret of type tls |
| kubernetes-dashboard-certs | Name of the secret |
| --key kube-uat-key.pem | Private key file |
| --cert kube-uat.pem | Certificate file |

The resulting secret looks like this:

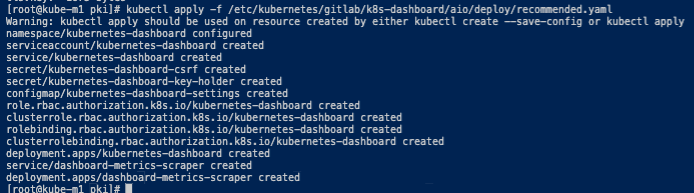

4.3.4 部署

kubectl apply -f /etc/kubernetes/gitlab/k8s-dashboard/aio/deploy/recommended.yaml

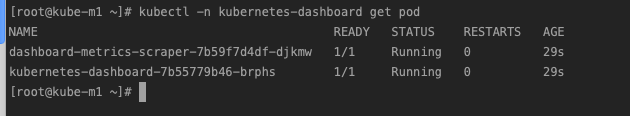

Pod 状态

4.4 配置ingress方式访问

4.4.1 Ingerss 代理配置

创建文件ingress-nginx-kubernetes-dashboard.yaml

apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: ingress-nginx-kubernetes-dashboard
  namespace: kubernetes-dashboard
  annotations:
    # Ingress controller class that handles this resource
    kubernetes.io/ingress.class: "nginx"
    # Allow regular expressions in the paths of the rules
    nginx.ingress.kubernetes.io/use-regex: "true"
    # URL rewrite target
    nginx.ingress.kubernetes.io/rewrite-target: /
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
    nginx.ingress.kubernetes.io/backend-protocol: "HTTPS"
    # Timeout for connecting to the backend, in seconds (default 5)
    nginx.ingress.kubernetes.io/proxy-connect-timeout: "600"
    # Timeout for sending a request to the backend, in seconds (default 60)
    nginx.ingress.kubernetes.io/proxy-send-timeout: "600"
    # Timeout for reading a response from the backend, in seconds (default 60)
    nginx.ingress.kubernetes.io/proxy-read-timeout: "600"
    # Maximum size of a client request body such as a file upload (ingress-nginx default is 1m)
    nginx.ingress.kubernetes.io/proxy-body-size: "10m"
spec:
  tls:
    # The hosts must match names covered by the certificate created in 4.3.2
    - hosts:
        - "*.kube.uat"
      secretName: kubernetes-dashboard-certs
  rules:
    - host: dashboard.kube.uat
      http:
        paths:
          - path: /
            pathType: Prefix
            # networking.k8s.io/v1beta1 uses serviceName/servicePort for the backend
            backend:
              serviceName: kubernetes-dashboard
              servicePort: 443

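4.4.2 Kubernetes authorization

Create the configuration file kubernetes-dashboard-rbac.yaml and apply it with kubectl apply -f kubernetes-dashboard-rbac.yaml to resolve the 403 Forbidden errors.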

apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
  - kind: ServiceAccount
    name: admin-user
    namespace: kubernetes-dashboard

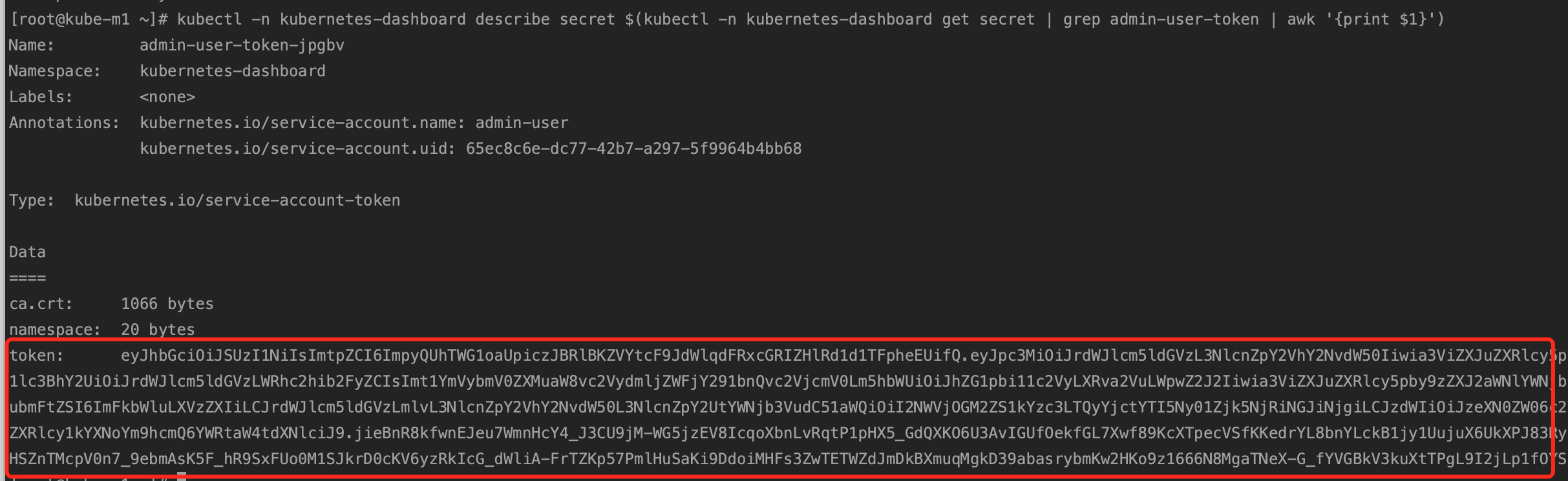

4.4.3 Get the token

The web UI supports two login methods, Token and Kubeconfig (see the screenshot in 4.4.5). Token login is used here; obtain the token as follows:

kubectl -n kubernetes-dashboard describe secret $(kubectl -n kubernetes-dashboard get secret | grep admin-user-token | awk '{print $1}')

Copy the token string; it is needed when logging in later.
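The describe output contains several fields besides the token, so a small filter makes copying less error-prone. The sketch below assumes the usual describe layout, in which the token appears on a line starting with "token:"; `extract_token` is a hypothetical helper name.

```shell
#!/bin/sh
# extract_token
# Reads `kubectl describe secret` output on stdin and prints only the token value.
extract_token() {
  awk '$1 == "token:" { print $2 }'
}
```

Usage: append | extract_token to the kubectl describe secret command above.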

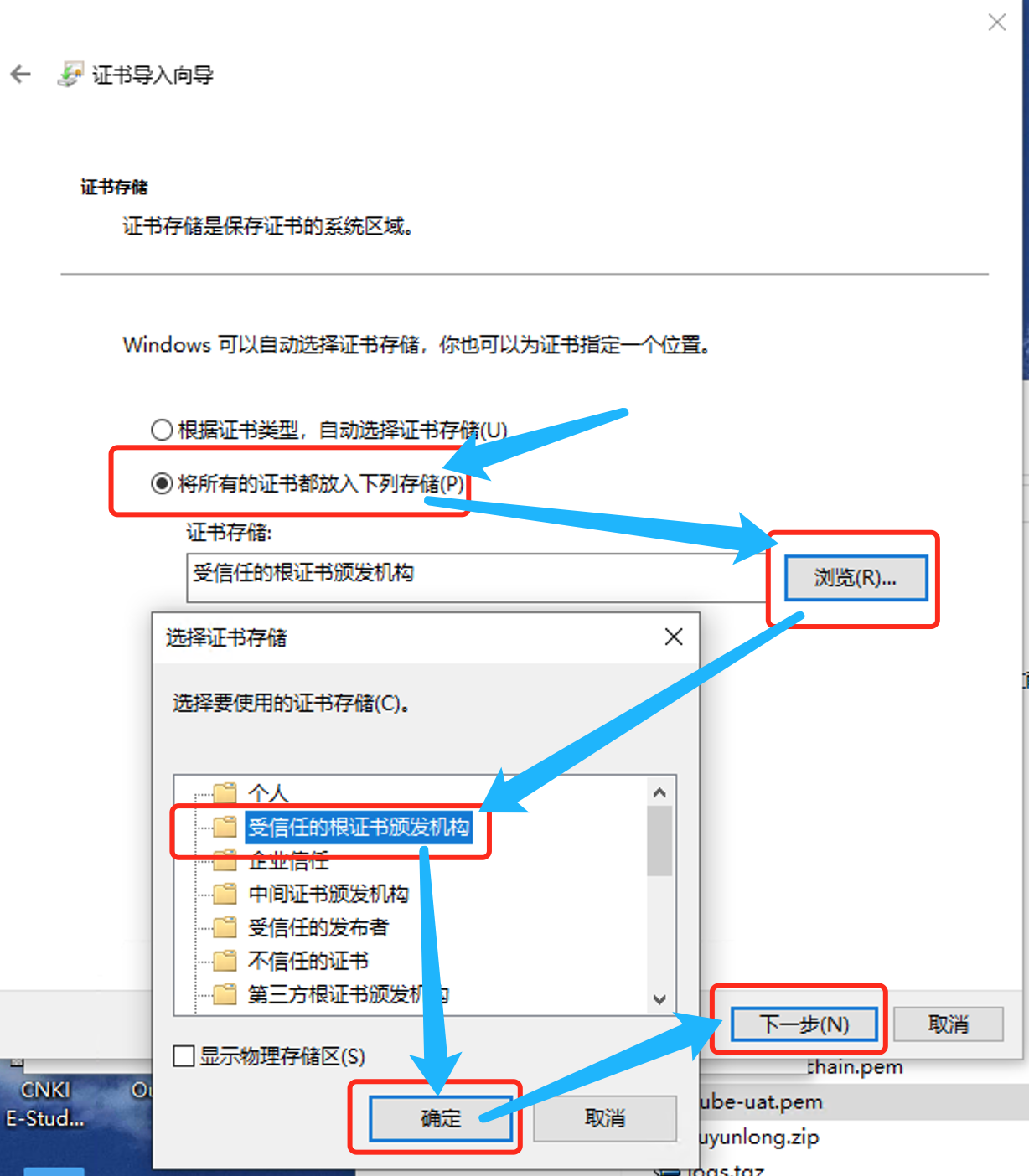

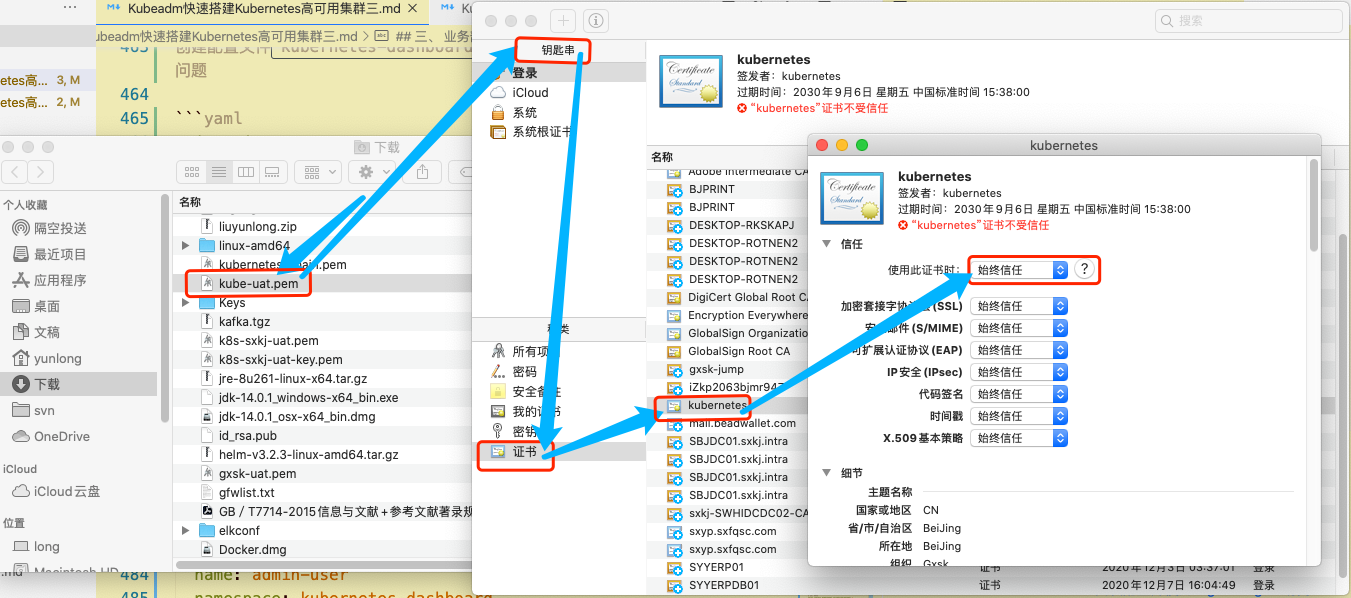

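4.4.4 Install the certificate

Download the kube-uat.pem certificate generated above to your local machine and install it. On Windows, install it into the Trusted Root Certification Authorities store.

On macOS, set the certificate to Always Trust in Keychain Access.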

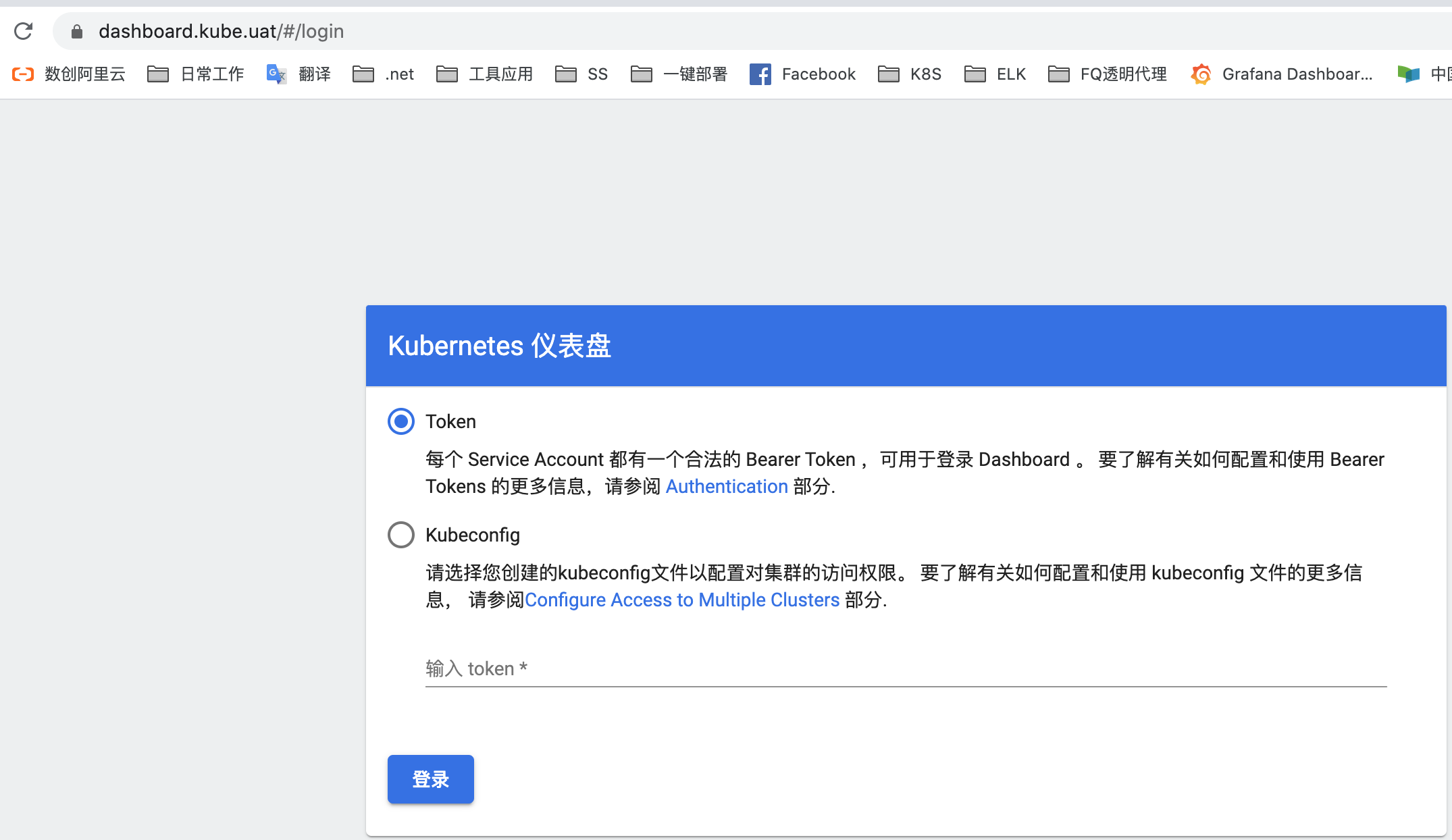

4.4.5 页面访问

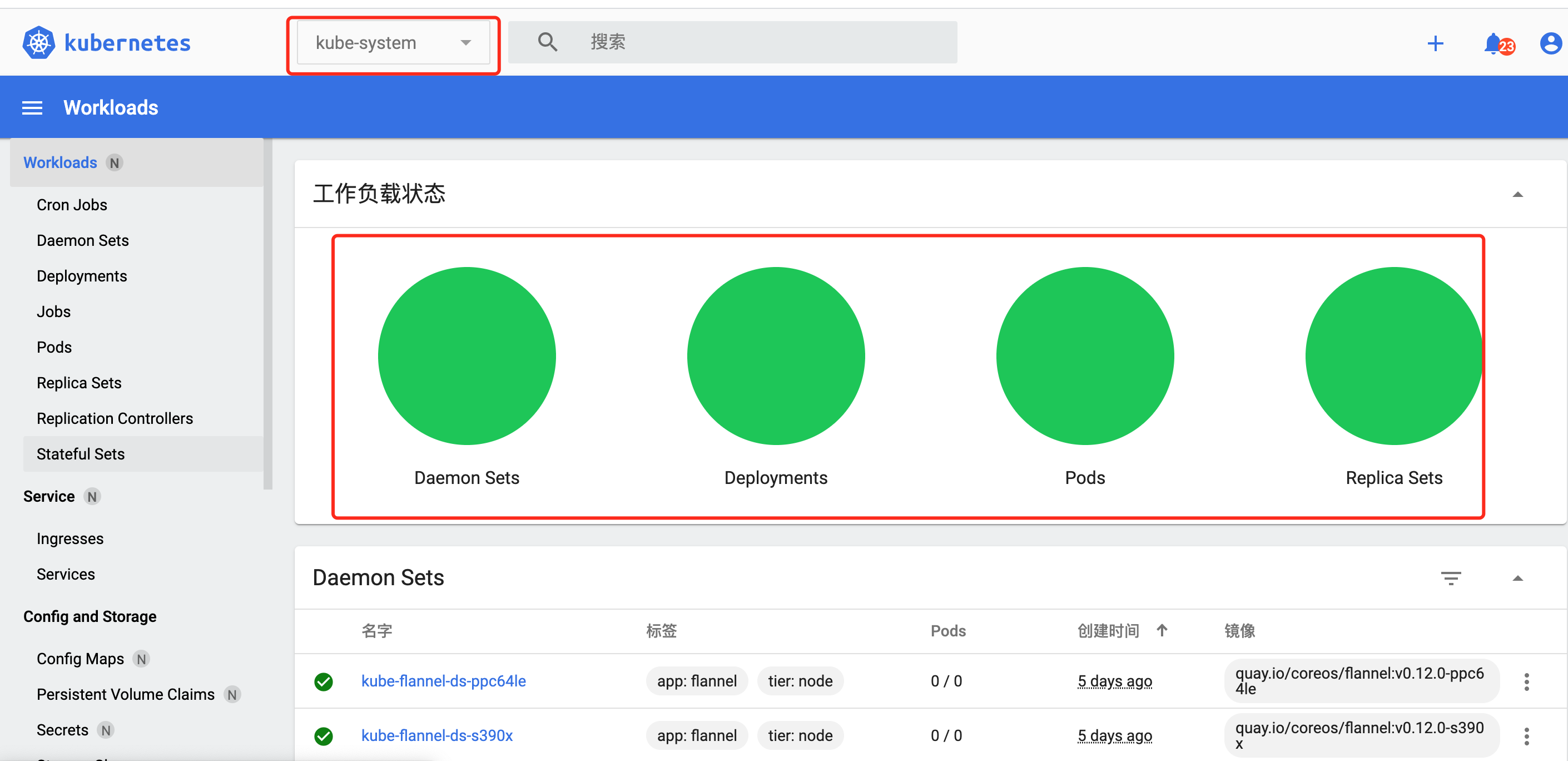

使用浏览器打开 https://dashboard.kube.uat, 显示如下页面,既为正常。

将登录选项选择到Token,将上面复制的 Token 串粘贴到里面,然后登录,即可查看内容了。
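Since kube.uat is an internal name, the client machine needs some way to resolve dashboard.kube.uat to a node running the ingress controller. If no internal DNS record exists, a hosts-file entry is one option. The sketch below only formats the entry; the helper name `hosts_entry` is hypothetical, and the IP 172.17.0.151 in the usage note is taken from the certificate host list as an assumed example of a node serving ingress traffic.

```shell
#!/bin/sh
# hosts_entry IP HOSTNAME...
# Prints one hosts-file line mapping IP to the given hostnames.
hosts_entry() {
  ip=$1
  shift
  printf '%s %s\n' "$ip" "$*"
}
```

Usage on Linux or macOS: hosts_entry 172.17.0.151 dashboard.kube.uat | sudo tee -a /etc/hosts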

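5. Attribution

Please credit the source when reposting. You are welcome to verify the sources cited in this article and to point out anything that is wrong or unclear, either in the comments below or by email to long@longger.xin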